How to use A/B testing in Recapture

What is A/B Testing?

A/B testing is a way to take one campaign and send two different kinds of emails to different customers and see which one has a bigger impact. A/B testing allows you to take a base idea for a campaign and then slowly improve on it over time by making and testing small changes, and then measuring the impact of those changes.

If you have a successful email campaign today, you can use A/B testing to find out if you can make it better!

Terminology You Need to Know

A/B testing uses some terms you may not be familiar with, so here is what each one means in Recapture.

- Variant: One of the two tested emails, either Variant A or Variant B (hence the term "A/B testing").

- Test Period: The length of time a test runs. In Recapture, we measure this by email sends. That's the most reliable way to get a good set of data for your test.

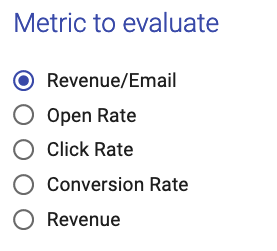

- Test Metric: The measure that decides which Variant wins. The metrics we support are: open rate, click rate, conversion rate, revenue, and revenue per email. You can pick only one per test.

- Winner: When a Test Period is over, we look at the selected Test Metric for Variant A and Variant B. The Variant with the higher Test Metric is the Winner.

- Promotion: A Winner is promoted to be your final campaign in Recapture once the Test Period is over and a Winner has been selected.
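
To make the Test Metric and Winner definitions concrete, here is a minimal sketch in Python. It is not Recapture's actual code: the field names, the assumption that each rate is a count divided by emails sent, and the rule that a tie keeps Variant A are all illustrative.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Raw counts for one variant (field names are illustrative)."""
    sends: int
    opens: int
    clicks: int
    conversions: int
    revenue: float


# Assumed definitions: each rate is a count divided by emails sent.
METRICS = {
    "open_rate": lambda v: v.opens / v.sends,
    "click_rate": lambda v: v.clicks / v.sends,
    "conversion_rate": lambda v: v.conversions / v.sends,
    "revenue": lambda v: v.revenue,
    "revenue_per_email": lambda v: v.revenue / v.sends,
}


def pick_winner(a: VariantStats, b: VariantStats, metric: str) -> str:
    """Return "A" or "B", whichever scores higher on the one chosen metric."""
    score = METRICS[metric]
    # Assumption: on a tie, Variant A (the control) stays in place.
    return "B" if score(b) > score(a) else "A"


variant_a = VariantStats(sends=100, opens=40, clicks=10, conversions=3, revenue=150.0)
variant_b = VariantStats(sends=100, opens=35, clicks=14, conversions=4, revenue=120.0)

print(pick_winner(variant_a, variant_b, "click_rate"))  # B
print(pick_winner(variant_a, variant_b, "revenue"))     # A
```

Notice that the same two Variants produce different Winners depending on the metric, which is why you pick a single Test Metric before the test starts.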

Why do I want to A/B test?

A/B testing is a great way to determine if your emails are making the biggest impact they possibly can with your customers. You can A/B test things like:

- Different subject lines

- Different email content/creatives

- Different discounts

Testing these gives you a better idea of what your customers like and react well to, and what falls flat with them. Recapture automates the process: you pick a change to test (e.g. a new subject line), choose the metric to measure (e.g. click rate), and run the test for a set number of emails (e.g. 200). Recapture then determines which Variant wins and automatically promotes it so it keeps sending.

What do you support in A/B testing?

How do I configure A/B tests in Recapture?

First, access A/B testing from the Campaigns area of your email campaign. Here is an example using Abandoned Carts:

Next, you can create an A/B test by clicking this button:

When you create an A/B test, you have several things to configure:

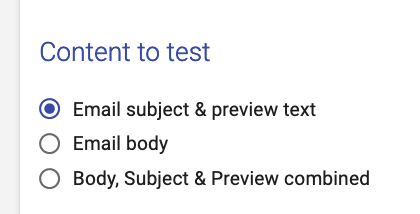

- What you want to test (subject, body, everything)

- What you want to measure (to determine what test variant "wins")

- How many emails to send for the test (per variant, to ensure you have enough data to make a solid conclusion)

- The content of the A and B variants

The screen you use looks like this:

You also need to name the test. We recommend a descriptive name like "A/B Test of <X>", where <X> is the part you're changing, for example: "A/B test of New Image Creative 09-17-22". Descriptive names help when you run a lot of tests and want to know precisely what was changed.

As for the other settings, we'll go over them in detail here:

Setting What to Test

The email Subject and Preview text are the first things a customer sees in any email (especially on mobile). You can select this option to make changes to JUST these parts of your email.

Setting What to Measure

You can only pick ONE metric to test against. This is important because it avoids confusion with mixed data results. Clear tests result in clear outcomes.

Setting the Variants to Send

To determine a Winner reliably, Recapture must send enough emails to judge each Variant fairly on the Test Metric.

To do that, we recommend that you send at least 100 emails per Variant (that's 100 for Variant A and 100 for Variant B, or a total of 200 emails for that campaign).

You can set that here:

Setting the Cool Down Period

The Cool Down period begins once Recapture has sent all of the emails for the test. It gives the last recipients of the emails some time to interact with them before the test ends. It's best explained by example.

Suppose you have an A/B test for an email that is sent 1 day after the cart is abandoned. You also send a follow-up email 3 days after that. If you A/B test the "1 day" email, then you will want a Cool Down period of 1-2 days to give customers who get that email time to click on it before they get another email from you.

The Cool Down period is set here:

Recapture recommends setting the Cool Down period to the time of the email campaign plus the time you think customers will take to interact with it.

Here are some guidelines on what to choose:

- If your tested email is within 1 hour and the next email goes out at 1 day, give the customers a Cool Down of 8-16 hours.

- If your tested email is within 1 day and the next email goes out at 1 week, give the customers a Cool Down of 2-5 days.

- If your tested email is within 1 week and there is no next email, give the customers a Cool Down of 4-7 days.
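
If you like to think in code, the three guidelines above can be written as a small lookup. This is only a sketch of the guidelines as stated here, not a Recapture feature: the function name and arguments are made up for illustration, and any schedule that doesn't match one of the three cases returns no suggestion.

```python
from datetime import timedelta
from typing import Optional, Tuple

HOUR = timedelta(hours=1)
DAY = timedelta(days=1)
WEEK = timedelta(weeks=1)


def suggested_cool_down(
    tested_delay: timedelta, next_delay: Optional[timedelta]
) -> Optional[Tuple[timedelta, timedelta]]:
    """Return the (shortest, longest) suggested Cool Down, or None if no guideline applies.

    tested_delay: when the tested email goes out.
    next_delay:   when the next email goes out (None if there is no next email).
    """
    if tested_delay <= HOUR and next_delay == DAY:
        return (8 * HOUR, 16 * HOUR)
    if tested_delay <= DAY and next_delay == WEEK:
        return (2 * DAY, 5 * DAY)
    if tested_delay <= WEEK and next_delay is None:
        return (4 * DAY, 7 * DAY)
    return None  # Not covered by the guidelines; use your own judgment.


shortest, longest = suggested_cool_down(timedelta(hours=1), timedelta(days=1))
print(shortest, longest)  # 8:00:00 16:00:00
```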

Updating the Content for the Variants

Depending on what you picked for the Content to Test section, this final section updates to show the fields you need.

If you picked Subject & Preview, you can change the values for those two fields in both Variants:

We recommend that you leave Variant A as your "control" (the part of the test that doesn't change) and put whatever updates you'd like to test in Variant B.

If you picked Body, you'll be able to edit the body of each Variant:

Running an A/B Test

Once you have configured the test, use the upper right area of the screen to either save it as a draft (if you need more time to work on the Variants) or start the test right away:

Until your test sends the configured number of emails (in this case, 200), you will see a progress bar showing how far you are from completing the test. Your statistics for sends, opens, conversions, clicks and revenue will update regularly.

When a test reaches the end of the sends, it will go into the Cool Down period as defined above.
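
As a rough sketch of the stages a test moves through, the snippet below works out the progress and current stage from the number of emails sent and the Cool Down length. The function names and stage labels are hypothetical, and how Recapture tracks this internally isn't covered in this article.

```python
from datetime import datetime, timedelta
from typing import Optional


def send_progress(sent: int, per_variant: int) -> float:
    """Fraction of the test's emails sent so far, counting both Variants."""
    total = 2 * per_variant  # e.g. 100 per Variant means 200 emails in total
    return min(sent / total, 1.0)


def current_phase(
    sent: int,
    per_variant: int,
    last_send_at: Optional[datetime],
    cool_down: timedelta,
    now: datetime,
) -> str:
    """Return "sending", "cool_down", or "complete" (labels are illustrative)."""
    if sent < 2 * per_variant:
        return "sending"
    if last_send_at is not None and now < last_send_at + cool_down:
        return "cool_down"
    return "complete"  # Cool Down is over, so a Winner can be selected.


last_send = datetime(2022, 9, 17, 12, 0)
cool_down = timedelta(days=2)

print(send_progress(150, 100))  # 0.75
print(current_phase(150, 100, None, cool_down, last_send))  # sending
print(current_phase(200, 100, last_send, cool_down, last_send + timedelta(days=1)))  # cool_down
print(current_phase(200, 100, last_send, cool_down, last_send + timedelta(days=3)))  # complete
```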

At the end of the Cool Down period, a Winner will be selected. The Winner will show on your test with a small trophy icon like this:

Can I stop a test in progress?

Yes. To do that, hover your mouse over the A/B test and then click on Settings for a test that is running:

That will stop the existing test.

Can I run multiple tests at once?

You can test multiple campaigns at the same time, if you like.

You can only run one test per campaign at a time. When a test is complete, you can create another test for that same campaign.
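
Put as a simple rule, a new test can start only if its campaign has no test already running. Here is a tiny sketch of that check; the function name and the "Winback" campaign are hypothetical, used only for illustration.

```python
from typing import Set


def can_start_test(campaign: str, campaigns_with_running_test: Set[str]) -> bool:
    """A campaign can start a new test only if it has no test currently running."""
    return campaign not in campaigns_with_running_test


running = {"Abandoned Carts"}

print(can_start_test("Abandoned Carts", running))  # False: wait for the current test to finish
print(can_start_test("Winback", running))          # True: a different campaign can test in parallel
```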